Two new experiments show that most people do not even consider that a personal message could be AI-generated, even when they themselves use artificial intelligence to write.

To see how people judge someone based on their writing in the age of ChatGPT, my colleague Jiaqi Zhu and I recruited more than 1,300 U.S.-based participants, ages 18 to 84, and showed them AI-generated messages, such as an apology sent in an email. We split our volunteers into four groups: Some people saw the messages with no information about who or what wrote them, as in everyday life. Others were told the messages were definitely written by a human, definitely AI-generated, or that the source could be either.

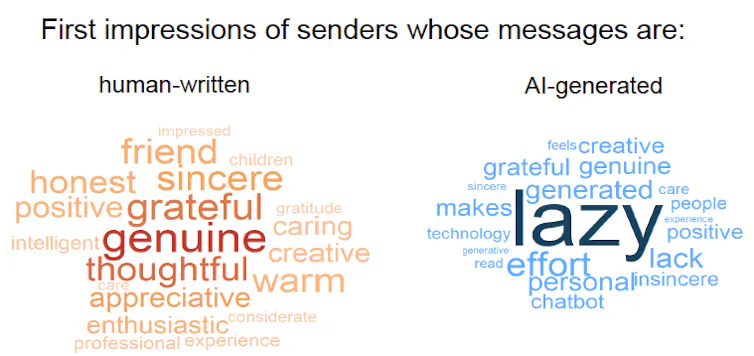

We found a clear “AI disclosure penalty.” When people knew a message was AI-generated, they rated the sender much more negatively (“lazy,” “insincere,” “lack of effort”) than when they believed that the same text was written by a person (“genuine,” “grateful,” “thoughtful”).

But here’s the twist: The participants who weren’t told anything about authorship formed impressions that were just as positive as those of people who were told the messages were genuinely human.

This complete lack of skepticism surprised us, and it raises new questions. Maybe participants weren’t familiar enough with AI to realize that today’s models can produce detailed and personal messages. (They can.) Or perhaps participants have never used AI themselves. (They likely have.) So we also tested whether participants’ own AI use changed how they judged senders.

To our even greater surprise, we found little to no effect. People who use generative AI quite frequently in their daily lives, at least every other day, did penalize AI use slightly less when AI authorship was disclosed, compared with people who never or rarely use AI. But participants were no more skeptical by default: When authorship was not disclosed, heavy AI users, light AI users, and nonusers all tended to assume the text was written by a person and formed essentially the same impressions.

Why it matters

This lack of skepticism, and the absence of negative impressions that comes with it, matters because people make social judgments from text all the time. Recipients treat the time and effort that go into a written message as an insight into the writer’s sincerity, authenticity, or competence, and those impressions shape people’s decisions in friendships, dating, and work.

But our main findings reveal a striking disconnect: People usually don’t suspect AI use unless it is obvious. This unawareness creates an ethical dilemma: People who use AI in secret can enjoy the benefits while facing almost no risk of detection. Meanwhile, paradoxically, people who are up front and admit to using AI suffer a reputational hit.

Over time, a lack of skepticism and awareness could reshape what writing means in everyday life. Readers might learn to treat writing as a less reliable signal of someone’s character or effort, and instead rely on other forms of communication. For example, widespread AI use has already prompted employers to discount the value of cover letters from job applicants. Instead, they’re relying more on personal recommendations from an applicant’s current supervisor or connections made through in-person networking.

What other research is being done

Other researchers have documented a range of negative impressions about people who disclose their AI use. Studies show it makes job candidates seem less desirable and employees seem less competent. Readers of creative writing perceive AI users as less creative and inauthentic. People see personal apologies and corporate apologies that stem from AI as less effective. In general, disclosing AI use decreases trust and undermines legitimacy.

But without disclosure, there’s clear evidence that most people cannot reliably detect AI-generated text, even with the help of detection tools, especially when the text is a mix of human-written and AI-generated content. Even when people feel confident about their ability to spot AI text, their confidence may be nothing more than a self-affirming illusion.

What’s next

Even though our experiments didn’t reveal suspicion of AI use, that doesn’t mean people never suspect it in the real world. In some settings, people may already be hypervigilant about AI. Use in academia is an obvious example. In our next studies, we want to understand when and why people naturally start to suspect AI use, and what flips the switch between trust and doubt.

Until then, if you want your personal message to be judged as heartfelt, the safest strategy may be to make a phone call, leave a voicemail, or, better yet, say it in person.

The Research Brief is a short take on interesting academic work.

Andras Molnar is an assistant professor of psychology at the University of Michigan.

This article is republished from The Conversation under a Creative Commons license. Read the original article.